A Shifty Email Bug

Main Thread • 5 min read

It was 7:07 am. I woke up to 56 emails, 17 tweets, 9 Slack messages, and 4 telegrams. All of which alerting me my SaaS product had sent 3,625 email messages to 1,544 users overnight. I am Jack's cold sweat. 😅

This is a postmortem. Be warned it's a peek into the mind of a developer mixed with movie references and emojis to light the dark.

While only a few of the user replies were pushy, it was still pretty embarrassing. I pride myself on providing the best service. And here I am spamming my users. How did this happen?

The email users received was a follow-up email. It's sent to users who ran some of the newer Shifts to check how it went and get their feedback so I can improve the Shift.

It goes out weekdays at 3:00 am and to my knowledge had been running fine for the past two years. The code had not been changed since October 2018. But like the sleepy town of Dante's Peak there was something building under the surface, waiting to erupt and I ignored the signs. 🌋

This morning I'm dealing with the aftermath though. I immediately posted a tweet to help build awareness I was looking into it. This slowed the incoming replies.

I also disabled email entirely in all environments by sending everything to the logs using MAIL_DRIVER=log. This may seem like a knee-jerk reaction, but I wasn't sure where the problem originated. At least nothing else would go out until I diagnosed the problem.

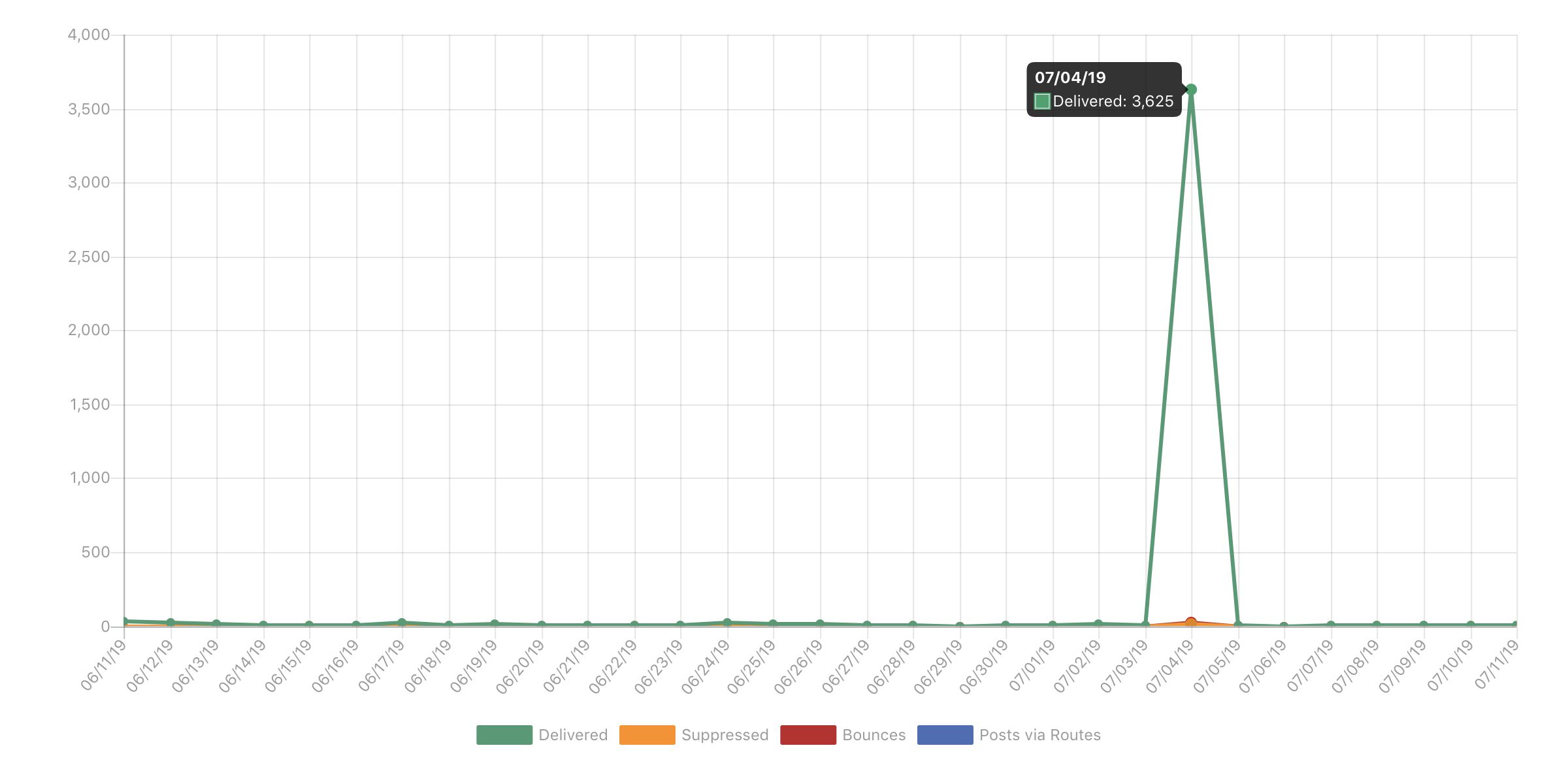

With this patch I switched into investigation mode. I jumped over to Mailgun to see if there were any clues within their logs. I also wanted a better understanding of the damage.

So with a hard swallow I clicked Logs. 🙈

The graph says it all. And while ridiculous, for a brief second I was glad to see it wasn't in the tens of thousands and exceeded my plan limits.

This was still pretty bad. I looked through the logs more closely to try and determine if there was a pattern. I mean did all users get a follow-up email?

It just didn't make sense. This job runs every night. As such the queue should never be this large. I mean 3,625 Shifts would need to be run in a 48 hour period. Inconceivable!

The code is pretty basic. An Eloquent query to collect recent order and send out any follow-up email:

1$orders = Order::where('status', Order::STATUS_FULFILLED) 2 ->where('followup_sent', 0) 3 ->where('created_at', '<', Carbon::yesterday()) 4 ->get(); 5 6foreach ($orders as $order) { 7 if ($this->shouldReceiveReviewEmail($order)) { 8 Mail::to($order->user->email)->send(new ReviewShift($order)); 9 } elseif ($this->needsToRunNextShift($order)) {10 Mail::to($order->user->email)->send(new NextShift($order->product->nextShift()));11 }12 13 $order->followup_sent = 1;14 $order->save();15}So what was up?

- Did I set some environment variable? No.

- Did I make code changes? No.

- When was the last time I upgraded the dependencies? 9 days ago.

- Was there some bug in Laravel? NO! Stop. No. No. No!

- What did I change recently? Honestly nothing.

The only change I made was resetting a user's account who couldn't log in with GitHub. But that was a database change, to an old account.

So what! 🤷♂️

From experience, the issue normally stems from the most recent change - however unlikely it might seem. But it didn't make sense. I wasn't looking at the problem. What's the problem?

I kept thinking what did I do. Not the problem itself. I need to go back to the 5 Whys.

- Why did 3,625 emails go out? Because the follow-up job

- Why did the follow-up job send so many emails? ...

I was only on my second Why. I downloaded a backup of the production database. I ran the same query as the job runs and sure enough found 3,625 records. These went as far back as October. Hmmm, October 2018 was when the code last changed. 💡

I compared the code changes. It was a simple column name change. That couldn't be it. And that would also mean these follow-up emails weren't sending for 9 months. No way man!

At this point I was losing focus. It was the 4th of July. So with email disabled and no more replies coming in, I resolved myself to revisiting it later. 🎆

Experience has also taught me sometimes this is best. I could have burned the entire holiday trying to figure it out and gotten nowhere. Instead, maybe I could walk away now and still enjoy the day while keeping it in the back of my mind.

It was 11:19 pm. I'm laying in bed about to doze off when the NZT hit me. My brain illuminated and it all came together. An email address was null. 🤯

The problem with the user account was because their email address was missing. They must have been queued up to receive a follow-up email. But because their email address was empty it caused an error. This prevented the follow up email task from completing. Night after night after night. I am the smartest man alive!

But could this really be? I received a notification from Sentry a while back about "accessing email property on null". But wouldn't I have received those every weekday morning though.

I went back to the query. Sure enough, the top result was for the corrected user. This means it was the first record in the Eloquent collection and had been blocking the job from completing for months.

I verified against production data no other accounts were missing email. While the application code clearly doesn't expect null, I quickly added an abort(409); for any attempt to create a user account with a null email address. I'll likely drive this out through tests later in the week.

So there it was. I had my 5 Whys:

- Why did 3,625 emails go out? Because the follow-up job.

- Why did the follow-up job send so many emails? Because it was backed up.

- Why was it backed up? Because there was a

nullemail address. - Why was there a null email address? Because their account was pending email verification.

- Why didn't this get caught? Because there was no check.

I reckon some developers would have just disabled email and moved on. But I had to know why. I wanted to provide great service. Even if it was hard. Which brings me to one of my favorite movie quotes:

It's supposed to be hard. If it wasn't hard, everyone would do it. The hard is what makes it great.