I'm honestly not sure if this is all that impressive. It admittedly took longer than I thought. Over the same time, I could've made more as a well-paid software engineer. The service is for a pretty mundane task. I probably could've made 3x the revenue. These are some of the thoughts I'll explore in this post.

This post is massive. Roughly 7500 words. I started writing it a few years ago. Mostly as a journal. Sometimes as an outlet. I've reorganized it as a review of the last 6 years I've spent working on Shift. With some personal reflection mixed in.

I did my best to focus each section on a specific topic. Each has a descriptive title and document link. I also tagged each section for Business Insights and Personal Notes. Hopefully these help you to skip around or come back later to read more.

They say as a solopreneur you never forget the day you pass $1,000,000 in revenue. Last Tuesday was that day for me. It was 6 years in the making.

Let's start at the beginning. It was php[world] 2015. I gave two talks. One being All Aboard for Laravel 5.1. It focused on the changes from Laravel 4.2 to Laravel 5.1 and provided an upgrade path between these versions.

Taylor Otwell, the creator of Laravel, was in attendance. I spoke with him after the talk and asked if he knew of any scripts for upgrading Laravel 4.2 applications. His simple, but memorable reply was, "No. But I'd use it."

As someone who had been around PHP projects, I'd seen such scripts. They existed for Magento patches, as well as other frameworks, like CakePHP. As a contractor for web agencies, I thought about scripting portions of the upgrade process for Laravel projects.

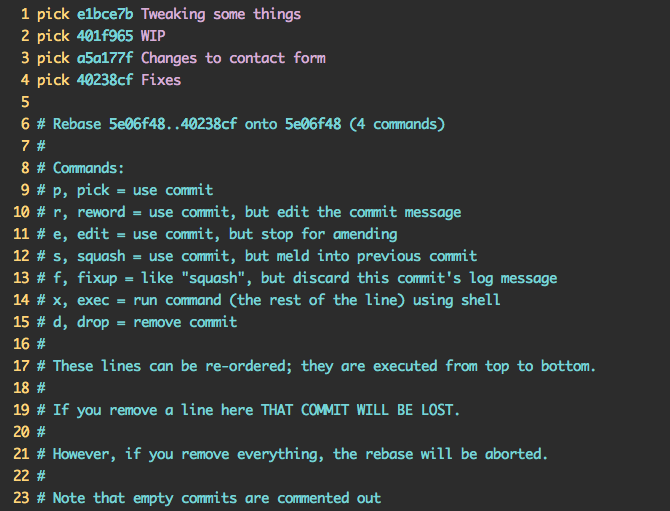

The combination of Taylor's response and my own need gave me a nudge to try and build something. To add to the perfect sequence of events, the conference hosted a hackathon. I placed myself at Taylor's table. We started the evening with a discussion on YAGNI. Ironically, Taylor left the conversation to incorporate Vue into Laravel. I worked on the smaller upgrade path between Laravel 5.0 and the recently released Laravel 5.1.

I hacked together some simple PHP and shell scripts through the night, occasionally interrupting Taylor for clarifications on the Laravel code changes. After all, I was still learning Laravel.

By the end of the night I had a functional prototype to try. However, I didn't have a Laravel 5.0 application to test it on. While it might be hard to believe, neither did Taylor. I went around the room from table to table asking if anyone had a Laravel 5.0 application.

Everyone pointed me back to Taylor, saying, "That's the guy you want to talk to." This foreshadowed some of the challenges I would face down the road. Ultimately Taylor posted on Twitter to help me find some alpha testers.

I continued building the scripts on the flight home and in the following weeks. Once I completed the upgrade from Laravel 5.0 to Laravel 5.1, I moved on to upgrading Laravel 4.2 to Laravel 5.0. This way, I could test the entire upgrade path on some of my own apps.

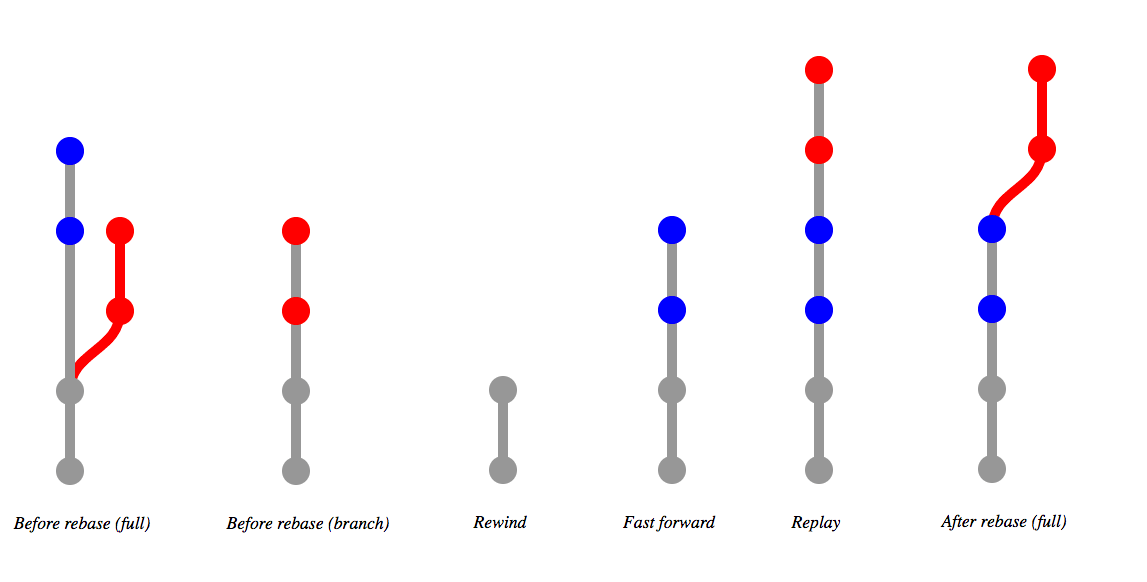

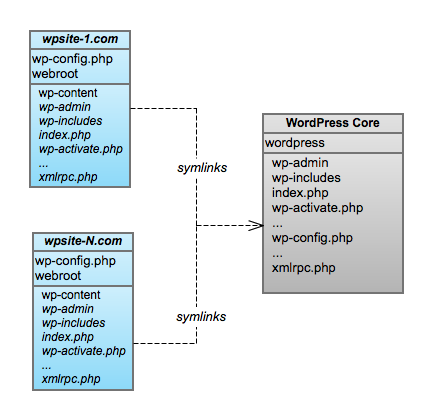

I honestly don't remember why I decided to make Shift a software as a service (SaaS). Taylor's words may have given me confidence. Or maybe after selling iOS applications I believed in paid software. Either way, over the next few weeks I developed a single page site (with design from my buddy Shawn Coots). It authenticated with GitHub and used Stripe Checkout for payment. The website spawned a PHP script in the background. When complete, the script opened a Pull Request with code changes for upgrading your Laravel application.

Even though I decided to sell the product, I priced it ridiculously low. I was attempting to balance what some might expect to be free with the developer hours it saved. I was also heavily influenced by what I was familiar with at the time, the App Store - where software was sold for pennies within a large marketplace.

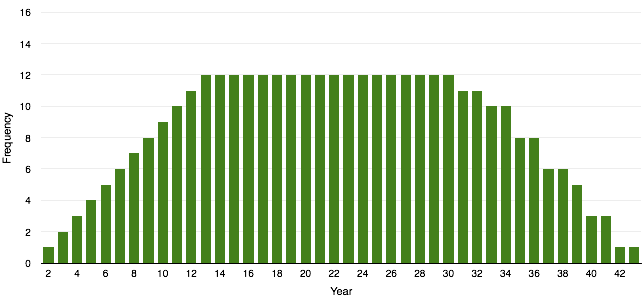

Even in 2015, Laravel was already one of the prominent PHP frameworks. It had nearly 1,000,000 downloads. I did the same naive calculation as any founder. I figured a couple bucks from everyone and I'd reach $1,000,000. The App Store and Google made such a long-tail approach seem doable.

The upcoming release of Laravel 5.2 gave me an opportunity. It also gave me a hard deadline. Just a few days away it forced me to launch. But I had not built the Laravel 5.2 Shift. I frantically threw it together to be able to launch with the release of Laravel 5.2.

I launched Shift on December 23, 2015. I marketed Shift as easy as 1-2-3: Sign in, pay, and review the PR. I charged $3 for the Laravel 5.1 to 5.2 upgrade. $5 for the Laravel 5.0 to 5.1 upgrade. $7 for the Laravel 4.2 to 5.0 upgrade (big money). Each of these Shifts likely saved developers hours of work.

Over the Christmas holiday I made $80. While that doesn't sound like much, it accounted for about 20 runs. During a holiday week no less. I had no social media presence. Taylor retweeted the Shift release post. So that helped. All things considered, it was a good launch.

Over the next few weeks Shift made another $140. I had taken an idea from nothing to making income in less than a month. I didn't necessarily know it at the time, but Shift had some pretty influential users. Not only did Taylor use it, but so did Jeffrey Way, Freek Van der Herten, and Adam Wathan.

What I did next was not only a personal trait, but also good practice. It's something you'll hear almost every successful founder recommend. I reached out to my customers. I have no doubt this helped make Shift the success it is today.

I emailed every user who ran a Shift and asked them three simple questions:

- How did Shift do?

- What manual changes did you still have to make?

- How did you find out about Shift?

The answers to the first two weren't always so positive. In fact, I remember Jeffrey Way replying that while he liked the idea, he felt it was rather "buggy". This might have been pretty discouraging. Some may even have taken the service down until they could make it better. But I didn't.

It wasn't that he was wrong. Shift was buggy. I knew I had cut corners. I rushed the development of the Laravel 5.2 Shift. I was still pretty new to Laravel. Even though I'd been writing PHP for over 10 years, I'd been writing Laravel for less than a year.

I wasn't as familiar with all the features of Laravel. All the ways you could craft your application. Even all the changes between versions. I didn't really know the common coding conventions. Definitely not as much as Jeffrey Way. Who taught it all on Laracasts.

No matter what, I reviewed and answered every single reply. I took them as an opportunity not only to improve the automation Shift provided, but also the experience. I still personally manage all support emails. I try to reply to as many as possible. It's a big part of my day. But I will continue to do it as long as I can.

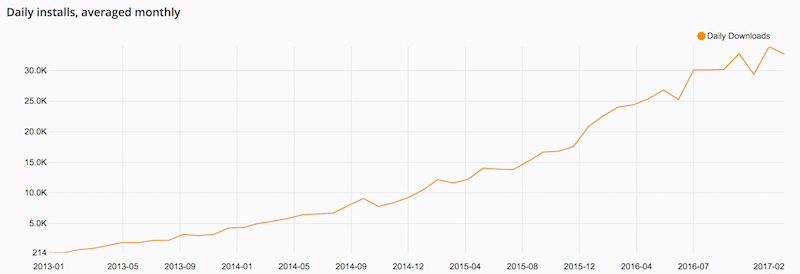

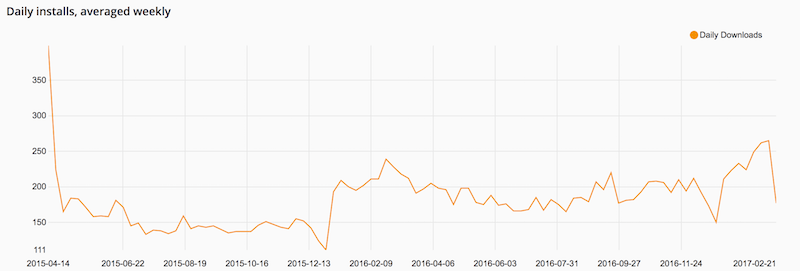

Over the next few months Shift continued to make a few hundred dollars a month. It wasn't growing in revenue, but it was growing its user base. Every month, another few dozen Laravel developers were giving it a try. Nearly all of them coming back to run more (Shift has a 90% retention rate).

Going back to the third question, I realized most of the users were reaching Shift from Twitter. The retweets Taylor provided in the beginning went straight to Shift's target audience.

Taylor's tweets are invaluable. I don't think they are a silver bullet. But, sticking with the metaphor, they do provide extra gunpowder. Anything coming out of the Laravel community would not have the same success without Taylor's backing. Shift included.

In the end, Twitter provided a network effect. When Shift did well (or I won users over during the feedback process), users would tweet about Shift. Those tweets would reach new users, I'd improve Shift, and the cycle would continue. There's a limit. But it definitely helped in the beginning.

Over the next few months I worked to establish my presence in the Laravel community. I also became more familiar with Laravel itself. I had the fortune of being asked to speak at Laracon on that very topic Taylor and I discussed back at the hackathon - Practicing YAGNI.

For me, it was an opportunity to speak at a premier conference. In my hometown no less. On a stage where I grew up watching plays and bands. For Shift, it allowed me to reach 400 members of my target audience. The conference was right before the release of Laravel 5.3. After which, I noticed a 10x increase in revenue. It took Shift from a few hundred dollars a month to a few thousand.

Ramen Profitability

Business Insights

In 10 months, I had taken Shift from $0 to $3,000 a month. It was Ramen Profitable. I wasn't familiar with this term until I completed a timeline on Indie Hackers. The amount may not be that impressive. Remember, prices were between $3-$15. So Shift was getting nearly a thousand runs a month. The trajectory was impressive.

Unfortunately, I treated Shift as a side project. Something which brought in seasonal income. I didn't prioritize it. In April 2017 I accepted a 1-year consultant role with Papa Johns. It paid double my current job and 10x Shift's annual revenue. Financially, there was no reason for me to focus on Shift.

That year I wasn't accepted to speak at Laracon. As such I didn't see a pop in revenue or Twitter followers like 2016. My audience grew organically, but I wasn't maintaining any momentum. Sales spiked in March and September for the Laravel releases. Otherwise revenue remained between $3,000-$5,000 a month.

I continued to be active within the Laravel community. Often focusing on PRs and communicating with peers. One of which being Adam Wathan. He was way farther down the road of a solo founder. I guest starred on a few episodes of his Full Stack Radio podcast. Over the years, I've been able to bounce ideas off of him. I'll absolutely credit him for recommending tiered pricing.

Between 2016 and 2018, Laravel versions 5.4, 5.5, 5.6, and 5.7 were released. This added 4 additional SKUs to Shift's catalog. I had increased the base price to $9, and staggered each version by $2. So the most recent Laravel 5.7 Shift was $9, and the Laravel 5.0 Shift was $21.

In April 2018, I renewed my contract with Papa Johns for 6 months. The growing catalog was increasing revenue for Shift. Yet it was still nowhere near my salary. Nor was its trajectory indicative of passing that any time soon. The combined income from the contract and Shift also put me on track to financial freedom.

Fortunately, I was accepted to speak at Laracon 2018. This time, I felt comfortable incorporating Shift. I gave a talk called Laravel by the Numbers. It shared some of the insights into developing Laravel applications. Ideally guiding developer decisions. It was well received. To this day, developers continue to thank me for the insight it provided.

Laravel 5.7 was released a few weeks after the conference. Shift had a 30% increase in sales and hit a monthly revenue high of $6,315. When my contract ended in October 2018, I finally decided to go "full-time" on Shift.

I titled this section as a missed opportunity. Looking back I could have taken another path - I could have gone full-time much earlier. I had grown to ramen profitability in less than a year. For the next two years I let revenue stagnate. I completely lost the momentum.

Had I kept the momentum, Shift might have reached the same level by the next Laravel release in March 2017, instead of October 2018. Almost two years earlier. I don't think it would have taken much - a coordinated marketing effort on Twitter and leveraging some existing relationships. I don't know. I do know I lost momentum.

I don't have any regrets. But from a business perspective, this was a misstep. Going full-time on Shift wasn't my mindset at the time. I still didn't think it was anything. I am not a business guru. I am not a pushy salesperson. I am a decisive person. I am a problem solver. The truth is, I stumbled into a niche market. Nothing more. I just liked writing code automation. I never really had a business plan.

Going full-time on Shift

Personal Notes

With the recent growth, I spent more time on Shift. It still wasn't making more than my day job. But Shift also didn't have its usual dip post-release. Revenue stayed above $6,000 per month heading into 2019. Since I managed to save money during my contract extension, I felt comfortable giving Shift a year. If I dipped into that savings, I would get another job.

This time, I made a plan. I would make Shift a true SaaS by introducing a subscription service. While the bi-annual release cycle created recurring revenue, it wasn't stable. Subscriptions allowed users to lock in the cost of upgrading. It also allowed me to forecast revenue. This way I could better measure growth and feel more comfortable with my decision to go full-time.

As with any launch, there were a few early adopters. But it wasn't a no-brainer purchase that I (again) naively thought it would be. Looking back I think there were two reasons for this.

First, Laravel wasn't necessarily releasing that many new features at the time. As I would learn, in the real world, not everyone cares about running the latest version right away. Second, Laravel adopted long term support (LTS). Certain versions of the framework were guaranteed support for 2 years. Further giving developers comfort to remain on older versions of Laravel.

The combination likely prevented the subscription model from being as appealing at the time. I pivoted. I attempted to provide Shift for additional platforms. The most obvious was PHP itself. After all, if upgrading Laravel made thousands of dollars a month, upgrades for the larger PHP community should achieve an equal or higher amount.

If so, I could grow monthly revenue above $10,000 a month ($120,000/year). That would be a competitive developer salary. Although still not as much as I made consulting. Unfortunately, the PHP Shifts fell even more flat than the subscriptions. To this day, the PHP Shifts account for less than 1% of all Shifts run.

This goes back to the night of the hackathon. I didn't see it at the time, but there was a division between PHP and Laravel. This has likely been exacerbated by the rise in popularity of Laravel. We have unfortunately all been witness to the attacks on Twitter and Reddit. I myself have been caught in them a few times.

The bottom line (no pun) is the PHP community doesn't see the value of Shift. I think the Laravel community is more accepting of paid products and services. While upgrading PHP versions should appeal to a larger audience, that audience isn't as willing to pay. They would rather do it themselves. This, of course, goes for all developers. Anyone really. Some people don't see their time as a cost. Or, they just like doing it themselves.

I still make some PHP Shifts. Mostly for my own use. Or for code used by Laravel, such as PHPUnit. These Shifts are almost always free. I get asked about Shifts for upgrading PHP versions from time to time. But not enough to try again.

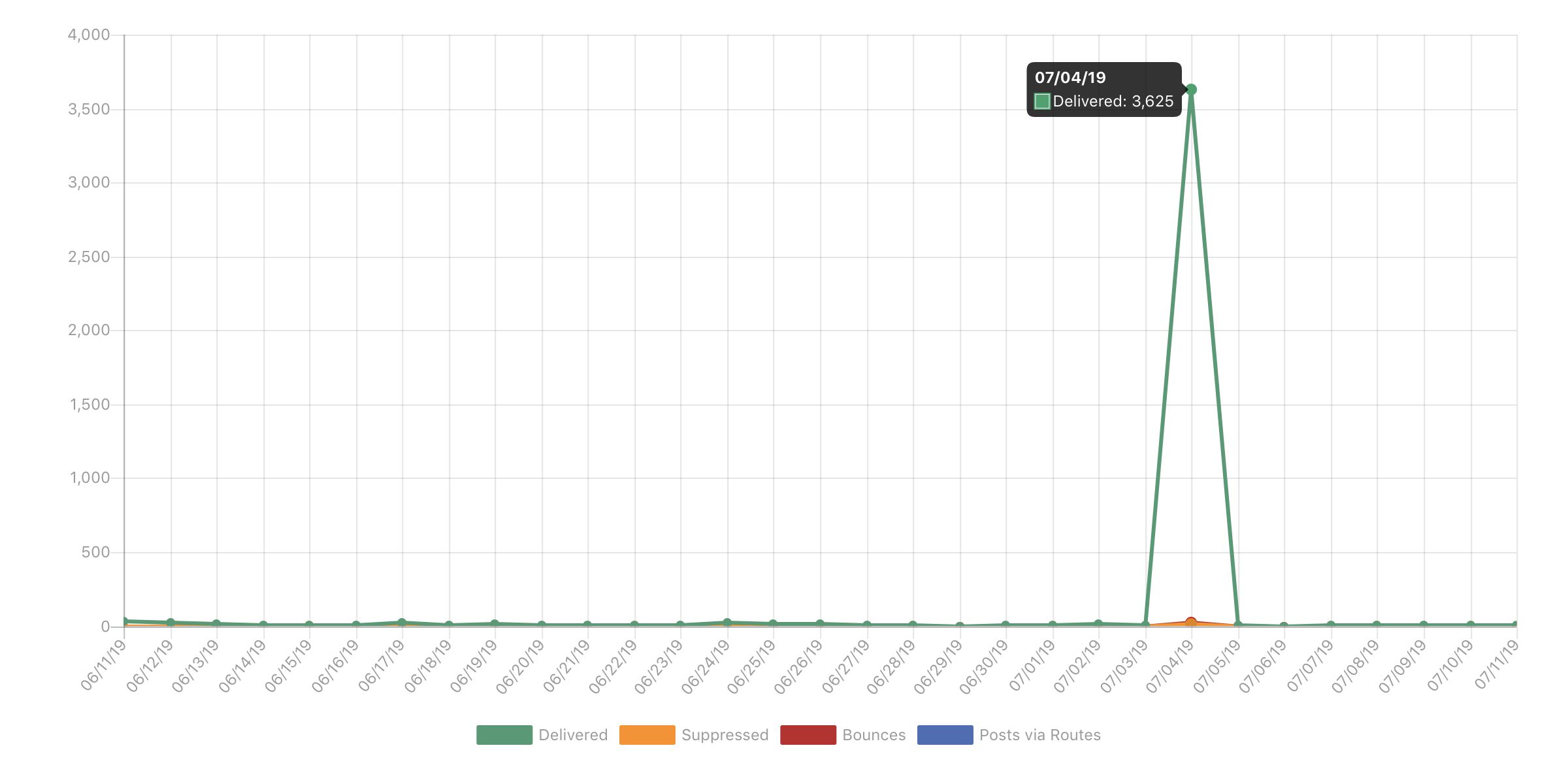

Shift continued to make between $6,000-$8,000 per month. In August 2019, I was accepted to speak at Laracon again. I gave a talk similar to the previous one, reviewing the "Shifty bits" within Laravel. This time, Shift had a 50% increase in revenue.

When Laravel 6.0 was released in September, Shift hit a new monthly revenue high of $20,312. Based on the growth in August, I do think Laracon continued to help. Laravel 6.0 was also an LTS version. Likely triggering older applications to upgrade. This finally made a subscription appealing. So a portion of the increase was the influx of upfront subscription payments.

A majority of sales were still from the pay-as-you-go Shifts. There was an 80% increase in the number of Shifts run for the month. Yet they didn't make up 80% of the monthly revenue. Subscriptions generated nearly 50% of the revenue. Something didn't add up.

The release and the continued growth of Shift helped break through to the next revenue milestone (5 digits). Revenue has remained above $10,000 per month since August of 2019.

Moving to tiered pricing

Business Insights

When I first launched Shift I sold each Laravel version upgrade for a few dollars. I was used to this model from selling iOS applications for $0.99. I was uncomfortable selling software. I mitigated this with a low price. I also justified it by thinking everyone would use it. Reaching a million in revenue is pretty easy when your multiplier is "everyone". It's also pretty naive.

First, not everyone is going to use your product. Some people aren't willing to pay. Some people won't believe in it. Some people just like doing it themselves.

Second, "everyone" is not really everyone. Your product has a market. That market has customers. In the case of Shift, this means Laravel developers. Technically speaking, it means Laravel projects. How many Laravel projects are there? 1,000,000, 500,000, 100,000? Stats like download counts lead you to inflate this number. In reality, once you filter down to projects running old versions of Laravel in production, this number becomes much smaller.

Finally, a low cost implies a low value. It's just the world we live in. When something is a few dollars, it's likely to be perceived as not very good or throw away. Sure, some will try it because of the low price. But others may not try it. A low price may hurt your value. You're also probably not attracting the type of customers you want.

It took me a long time to realize this. Over 3 years. Even then, I wasn't fully comfortable raising prices. It still took some pushing. I continually received feedback from users literally telling me "charge more". Marcel Pociot would actually buy a Shift, then PayPal me an additional "donation". I still receive emails from time to time saying charge more. But now I also receive replies from the abandoned cart emails saying Shift is "too expensive". It seems I'm getting closer to the right price.

I did raise prices over the years. Although, aside from the initial launch pricing, I only raised it after a new Laravel release. This is still how I do it today. The problem in the beginning was I'd only raise the price of the older Shifts by a few dollars.

I used staggered pricing. The oldest version would be the highest price. The next version would be a few dollars less, and the next a few dollars less. Until you got down to the latest version at the lowest price. Usually $9.

I did this to incentivize the customer to continue their upgrade to the latest version. Using the descending price as a sort of dangling carrot. I still think it's a good idea. Maybe just poorly executed.

Adam Wathan helped me realize this in early 2019. He pointed out that my staggered pricing lacked structure. As such it was unpredictable. Customers might not be able to forecast the cost of upgrading with Shift in their budget.

He suggested using tiered pricing. Older versions were all one price and the new version another price. In the end, I actually created three tiers. I aligned them with Laravel's Support Policy. Any unsupported version of Laravel was in the highest tier ($29). Supported versions were in the middle tier ($19). The latest version was in the lowest tier ($9).
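As a rough illustration of how the three tiers map onto support status, here is a minimal Python sketch. The `Support` enum, the function names, and the example upgrade path are hypothetical and only for illustration; they are not Shift's actual code. Only the three prices come from the description above.

```python
from enum import Enum


class Support(Enum):
    """Hypothetical labels for where a Laravel version sits in the support policy."""
    UNSUPPORTED = "unsupported"
    SUPPORTED = "supported"
    LATEST = "latest"


# Tier prices in dollars, taken from the three tiers described above.
TIER_PRICES = {
    Support.UNSUPPORTED: 29,
    Support.SUPPORTED: 19,
    Support.LATEST: 9,
}


def shift_price(status: Support) -> int:
    """Return the price of a single Shift for a version with the given status."""
    return TIER_PRICES[status]


def upgrade_path_cost(statuses: list[Support]) -> int:
    """Sum the price of every Shift needed along an upgrade path."""
    return sum(shift_price(status) for status in statuses)


# Assumed example: a project that is four versions behind, where two of the
# target versions are unsupported, one is supported, and one is the latest.
path = [Support.UNSUPPORTED, Support.UNSUPPORTED, Support.SUPPORTED, Support.LATEST]
print(upgrade_path_cost(path))  # 29 + 29 + 19 + 9 = 86
```

With only three possible prices, a customer can budget an upgrade by counting how many versions fall into each tier.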

Tiered pricing did two things. First, it anchored price between the versions. It was more clear to see the pricing path if you're running older versions. In turn, this more clearly demonstrated the incentive to stay current and pay the lowest price.

Second, and most importantly, it allowed me to raise the prices for Shifts across the board. Under staggered pricing, upgrading a few versions might have a cost delta of just $6. With tiered pricing, the cost delta might be $30. That's a 5x increase in revenue for a common use case.

Moving to tiered pricing was the most impactful change for Shift. It allowed me to hit my revenue goals for 2019 and continue staying full-time on Shift. It also makes Shift revenue less seasonal. As those upgrading outside of the release are usually purchasing Shifts at the higher price tier.

Diversification within a single market

Business Insights

Shift still had not surpassed the salary I was making as a consultant. Yet I could forecast it doing so in 2020. So I didn't set any conditions. I was comfortable working on Shift full-time until its decline.

By this point the Laravel Shifts were really refined. I had automated the upgrade for 9 Laravel versions. The code had evolved from copied scripts to core classes. This foundation made it easy to develop new Shifts quickly. So I wanted to try to tap into additional markets again.

This was mostly for diversification. I had seen multiple PHP frameworks come and go in my career. It seemed prudent not to have all Shift's revenue tied to one market. If I could reach another market as I did with Laravel, I might be able to double revenue.

PHP would be the obvious choice. Releases were becoming more frequent. There was a new major version release with PHP 8. Unfortunately, past experience left me very skeptical. I didn't believe there was any potential.

I considered JavaScript. I chatted with a few prominent members of that community. Most seemed to have the same sentiment I had about PHP. The community is split and the DIY mentality prevalent. There were just too many frameworks and too many packages with all sorts of little tools and scripts available. It would have been an uphill battle.

I wanted something like Laravel. That didn't just mean a full-stack web framework. It also meant a community that valued services. A willingness to pay for services. These are deeply ingrained into the Laravel ecosystem. From the beginning, Laravel had paid services like Forge and Laracasts. Anyone within the Laravel community learns the value of these services. I believe that makes them more open to other services within the community.

.NET, Java, and Ruby satisfied these conditions. They all had full-stack frameworks and communities where paid services were common. I had the most familiarity with Rails. I sent a quick email to DHH asking if he felt a service like Shift would be accepted within the community. He replied with some stats about the number of Rails sites in production and recognized the pain of upgrading.

Similar to Taylor's feedback, this was all I needed to get started. Like Laravel, I would start by building a few Shifts to upgrade between the recent versions of Rails. However, I hadn't used Rails since version 3. I realized I wasn't as familiar with it as I used to be. I also didn't have a presence within the community. There may be a small section of Laravel developers who were also Rails developers. I doubted this would be enough to break into the Rails market.

I decided to try and find a partner. Someone to not only help me complete the Rails Shifts, but also help with marketing. This proved a bit challenging. It reminded me of those times people asked me to build them an iOS app with some random idea they had. But now I was on the other side. It didn't feel right.

Time started to work against me. The next Laravel release was on the horizon. I was going to speak at Laracon again. I just didn't have the time to manage the new venture. I plan to revisit it. But it needs to feel right. Ideally finding a partner more organically.

I decided to diversify within the Laravel market. Upgrading was only one piece of maintaining a project. Maintenance, in general, is much broader. It includes things like writing tests, setting up CI, and code refactoring.

The current Shifts only addressed upgrading. All the Laracon talks I had given before were on these other topics. I had even written books and made courses on these topics. But I wasn't bringing them back into Shift.

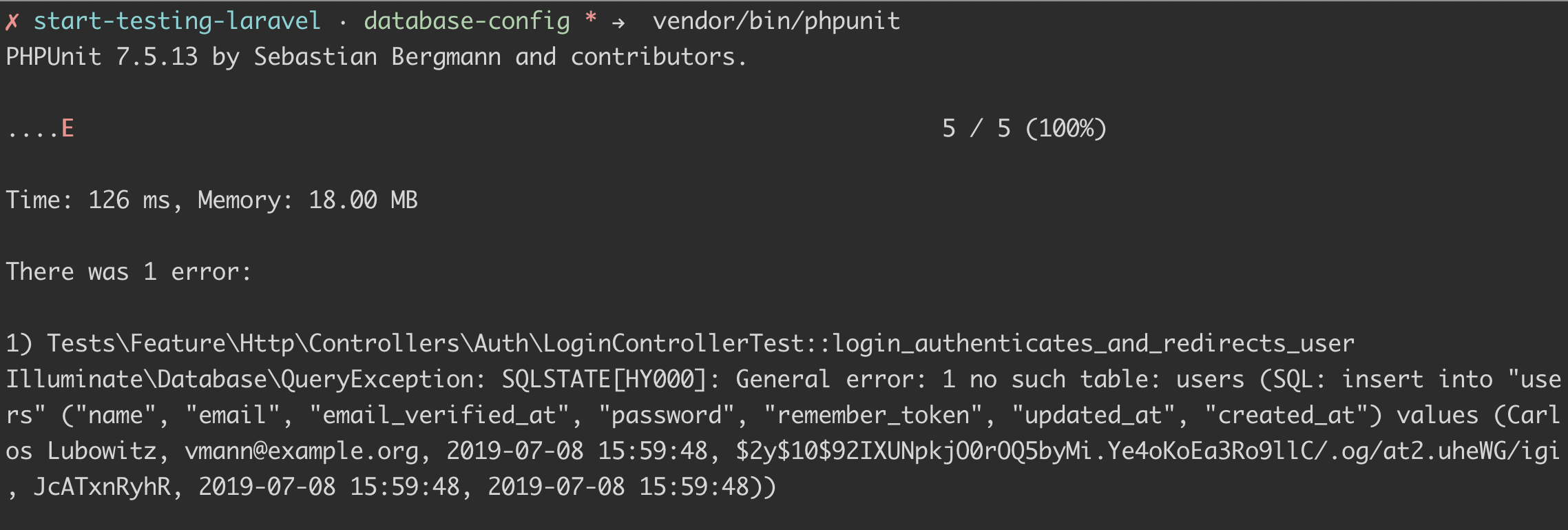

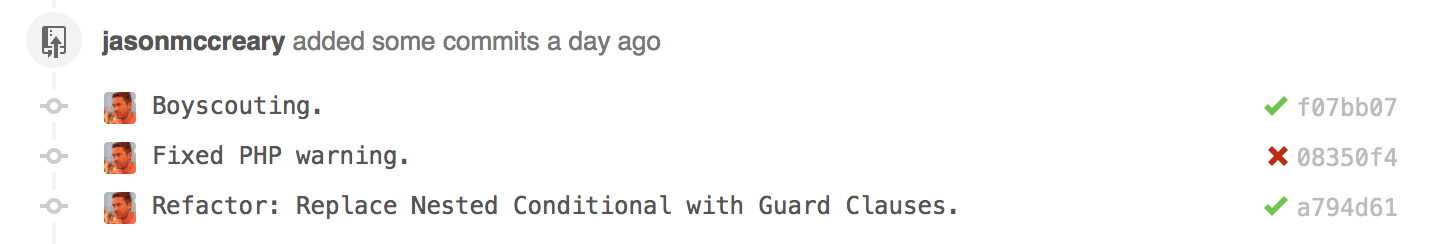

I created the Test Generator to generate tests for existing Laravel applications. This not only helped developers get started with writing tests, but also helped them to verify their upgrade. This complemented the upgrade process by giving them more confidence their application still ran as expected. All of which speeds up the maintenance process.

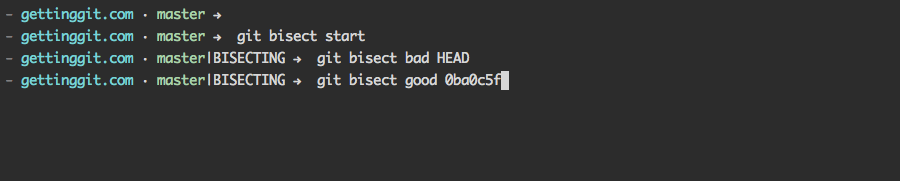

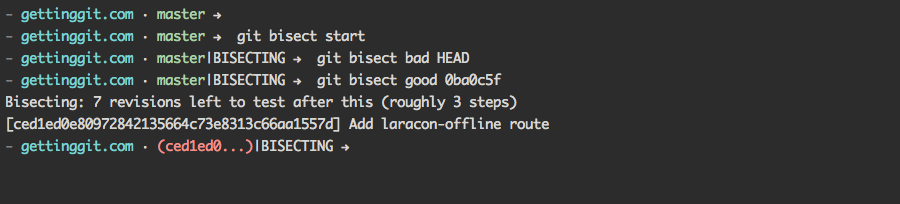

I also created the Shift Workbench. This took automation from the various Shifts and allowed them to be run individually as tasks. The cloud-based version released in May of 2020. With help from Jess Archer, we also released a desktop version (Electron) in July of 2021. The Workbench mainly focused on refactoring Laravel and PHP code.

Having a desktop app lowered the friction of signing into and interacting via the web UI. Instead developers could run automation directly on their local machines. This opens up all sorts of possibilities in the future. Eventually you'll have all the automation of Shift available conveniently from your desktop.

Finally, we recently created the CI Generator. This automatically generates CI for GitHub, Bitbucket, and GitLab. It scans your project to set up running your test suite and performing static analysis anytime you open a pull request. This further improves the experience when running other Shifts. Ideally leaving you with a successful build after the automated changes.

Sales from these don't necessarily move revenue as much as being in a different market would. They do however diversify Shift. At least beyond strictly Laravel upgrades. Now Shift moves to maintaining Laravel projects. This helps widen the market of Laravel developers. It also further combats the seasonality around the Laravel releases.

This has proven timely as Laravel moved to an annual release cycle. In fact, there will not be a new version of Laravel released in 2021. Despite this, Shift revenue has continued to grow. Albeit not as much as previous years. But I'm optimistic for 2022.

I will continue to try to push Shift into additional markets. In fact, we did find a market which overlaps with Laravel – Tailwind. Tailwind was created by members of the Laravel community. So it's commonly used within Laravel applications. Making these Shifts appeal to much of the same market. While they don't get a lot of use currently, I do think they'll receive more use as Tailwind itself continues to grow.

Shift isn't sexy

Personal Notes

Laravel is a community of giants. Taylor himself reported making over $10,000,000. Adam made $2,500,000 from his courses and created Tailwind CSS. Caleb Porzio makes $100,000/year in sponsorships and created Alpine.js. These were just the posts I've read over the years. There are more giants in the Laravel community like Jeffrey Way with Laracasts, Jack McDade with Statamic, and David Hemphill with Nova. It's really incredible.

With all these giants around you, it's easy to feel small. Shift isn't a revolutionary framework. It isn't an invaluable learning resource. It isn't a fancy app. It isn't really even a piece of software. It is a tool to help you upgrade your code. By comparison, that doesn't seem that cool.

At times this made me insecure about Shift. With so many cool things to talk about, who wants to talk about upgrades? Furthermore, sometimes the Laravel upgrades are touted as only taking 15 minutes. Which seemed to diminish the need for Shift.

I often find myself asking members of the Laravel community to retweet announcements for Shift. To me, it feels like I'm asking for favors. I believe in merit. If something is good, people will use it. People will talk about it. Asking people for retweets didn't make me feel like Shift was all that good.

Even though Shift was growing 40% year-over-year, I didn't feel like I had made something of value. I didn't feel like one of the giants around me. Despite jokes within the community, I didn't consider myself in the Laravel Elite.

By most measures I stagnated. Other peers within the community were growing Twitter followers exponentially. I barely broke 10k. Despite launching multiple educational products, speaking at Laracon, and creating Shift I didn't seem to have any popularity. On one hand, I could message Taylor or Adam and often get a reply. Yet other members of the community rarely responded. I often got ghosted when asking for advice.

I actually struggle with inclusion. It takes more for me to feel welcome or valued. Social media feeds off this. It was worse during Covid, where at times, it was my only point of contact. Normally I'd attend conferences and social events. In-person settings where I can glean some extra cues to feel included. Without this, there were days these feelings would creep in.

I joked with Jacob Bennett once by calling myself the garbageman. That's what I felt like. Someone who provided an essential service to the betterment of the community, but was rarely recognized for their efforts. I appended a 🗑 on my Twitter handle to embrace the joke.

I've seen multiple projects within PHP dragged down by legacy code. WordPress is the go-to example. I'd like to think Shift helps contribute to the freshness of Laravel. If so, I'm glad to be the garbageman picking up the trashy Laravel apps one-by-one.

Reaching $1,000,000 in revenue has provided some validation towards my efforts. I did it mostly on my own. There's been no special treatment. Shift isn't listed in the Laravel docs. I don't have an inside track with the Laravel team. It's just me, grinding it out the past 6 years.

This is all part of being a solopreneur. You just keep grinding. Grinding code. Grinding sales. Grinding support. Grinding internally. A constant grind. It's not easy. If it seems that way, you probably don't know the whole story or someone got real lucky.

Could be making a lot more

Business Insights

Bringing it back to revenue, Shift could be making a lot more. I could continue to raise prices. I could grow it into a real business. These are things I thought about over the years. Even battled with at times. I don't plan on doing either of them.

I'm happy with the tiered pricing. I could increase the price of the tiers. I could easily charge 3x-5x more. In doing so, Shift might lose 20% of customers. Working out that math, Shift would still increase revenue by roughly 3x (about 2.4x at the low end and 4x at the high end).
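For anyone who wants to check that math, here is a quick Python sketch. It assumes the customers who stay keep buying the same number of Shifts as before, which is a simplification of mine. The function name is made up for illustration.

```python
def revenue_multiplier(price_multiplier: float, customers_lost: float) -> float:
    """Revenue change when prices rise and a fraction of customers leave.

    Assumes every remaining customer buys the same number of Shifts as before.
    """
    return price_multiplier * (1.0 - customers_lost)


# Price increases from 3x to 5x, each paired with losing 20% of customers.
for multiplier in (3, 4, 5):
    result = revenue_multiplier(multiplier, 0.20)
    print(f"{multiplier}x price, 20% fewer customers -> {result:.1f}x revenue")
# 3x price, 20% fewer customers -> 2.4x revenue
# 4x price, 20% fewer customers -> 3.2x revenue
# 5x price, 20% fewer customers -> 4.0x revenue
```

Under that assumption, even the low end of the price range more than doubles revenue.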

I don't think I'll ever shake my "keep the cost low so everyone uses it" mentality. I do think I may drop the lowest price point. $9 for the latest version upgrade is somewhat nostalgic at this point. So I'll keep it as an introductory price. Offering it for a limited time after launch. Then raise it to the $19 price tier. Especially with Laravel's new annual release cycle.

This also reminds me of something Sebastian Schlien said about how early adopters are your most loyal customers. They are going to buy it regardless. Giving them a discount leaves money on the table. Since I'm already leaving so much money on the table by keeping Shift's prices low, I don't want to leave even more.

I could also hire someone in order to move into new markets. Hiring someone who meets the criteria above would require offering a competitive salary. That would eat into roughly a third of Shift's current annual revenue. I do not expect an employee to pay for themselves right away. An employee is a long-term investment. Eventually they add capacity and ultimately a return on their investment. I still don't look at Shift from the long view. So it's hard for me to see the value of an employee. That may be short-sighted.

Another angle would be to partner. Maybe through some kind of profit share. I'm more open to this. Yet it's not without similar challenges. Again, it would need to be competitive. Do they get a portion of the existing Shift revenue? Only joint ventures? What's the percentage? Do they vest over time? What is fair for both sides?

Ultimately, I don't want the stress. I have no desire to grow the business from the perspective of employees. I decided long ago - I am not a manager. I remember meeting with my boss at one of my first jobs. He said someday I'd have to choose between being a specialist or a manager. Even then I knew I wanted the specialist path. Multiple tries as a Team Lead later proved it. I'm just not good at managing humans. My expectations are too high. I have a strong work ethic. I'm not always comfortable transferring responsibility to someone else.

For these reasons, Shift will stay a solo venture. In fairness, Jess is on a monthly retainer. It's only for the hours she wants to spend. She's also moved into a partner role on some of the recent developments, like the Workbench desktop app. I am also contracting another developer for a few hours a month. I don't see either of them becoming employees. That's as much their choice as mine.

I also noted in the intro that I could have made more as a well-paid software engineer. $1,000,000 over 6 years is roughly $167,000 a year. That's a good salary where I live. It would take some time to achieve. Compared to revenue from Shift, a salary would have made more in 2016, 2017, 2018, and 2019. That's 4 of the 6 years I've been working on Shift.

In fairness, I didn't go full-time on Shift until 2019. That year, Shift made $126,000. Again, a good salary, but not more than a job. Yet in 2020, Shift made $267,000. Over a 2x increase. I expect another similar increase in 2022 with the release of Laravel 9.
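The figures quoted above can be double-checked with a few lines of arithmetic. This sketch uses only the numbers stated in the text. The variable names are illustrative.

```python
# Sanity check of the revenue figures quoted in the text.
TOTAL_REVENUE = 1_000_000  # total revenue over the period
YEARS = 6
REVENUE_2019 = 126_000
REVENUE_2020 = 267_000

average_per_year = TOTAL_REVENUE / YEARS
growth_2019_to_2020 = REVENUE_2020 / REVENUE_2019

print(f"Average per year: ${average_per_year:,.0f}")
print(f"2019 -> 2020 growth: {growth_2019_to_2020:.2f}x")
```

The average comes out just under $167,000 a year, and the 2019 to 2020 jump is a little over 2x, which lines up with both claims.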

At this point, Shift makes far more than I did at my previous job. Maybe not more than every job I could have. However, this focuses only on the money. Shift affords me far more benefits than money.

Whatever, I do what I want

Personal Notes

In the end, I am a Company of One. As such there isn't a lot of pressure for me to optimize or even grow Shift beyond what I can manage. Of course I take opportunities to optimize the Shift codebase and provide the best service. But from a business standpoint, I'm likely not managing Shift to its fullest potential.

I'm okay with that. I'm not a greedy person. Money is a means to an end for me. It's an unfortunate convenience of the world we live in. Money allows me to take better care of my family and myself. Money makes it easier for me to do the things I want to do.

Keeping Shift small gives me more freedom and less stress. I am in control of Shift's direction. I can take on new work or not. I can work more hours or spend more time with my family. Maybe I am leaving 3x revenue on the table. To me, that's an acceptable tradeoff. It won't keep me up at night.

What matters more to me is recognition. Being seen as a value-add within the community. When I question that is when the negative thoughts creep in. It's not so much the responses or retweets themselves. It's more about the recognition they convey.

Remembering the freedom I have helps. Really it's all about achieving freedom. And while it may have been slower or lesser compared to those around me, I achieved it nonetheless.

What I'm doing with the money

Personal Notes

The unfortunate truth is $1,000,000 really isn't that much anymore. I realize that's a bit ridiculous to say. Possibly unfair. $1,000,000 can be life-changing. But you have to be smart with the money.

I'm a frugal person by nature. Dare I say minimalist. I don't really buy much. You'll often find me wearing jeans and a conference t-shirt. I have a late 2018 MacBook Pro. For many years I didn't have a car. When I do own a car, I buy used. About the only things I'll splurge on are food and travel. That's how I treat myself.

I have a waterfall approach with personal finance. These are strategies I picked up from family, books, and podcasts. At a high level, I work towards the following goals:

- Build savings

- Pay off debts

- Real estate investment

- Pay off mortgage

- Wealth investments

- Retirement fund

As a software engineer I was able to earn a good salary. This allowed me to build my savings. I like to save enough to cover 6 months of expenses. This is my minimum for moving to the next goal. As I continue on to additional goals, I may build my savings to cover 1-2 years' worth of expenses.

Once I build my savings, I start paying off any debts. These might be student loans or car loans. Given my frugal nature, I didn't have much debt. Really only my monthly credit card amount. Which I pay in full.

Once the debts are paid off, I try not to take on any debt again. Maybe if there's some promotion with 0% financing. Then I may do it. But I make sure to have the extra savings to pay the amount off in full before the term ends.

Next, I look to invest in real estate. In the US, this means buying a home. I was able to purchase my first home before the 2008 financial crisis. Several years ago, I sold that home to move in with Ashley. We lived together to save for a new home. I actually paid Ashley rent which she put towards her student loans. The combination allowed us to purchase a new home together in a residential area of Louisville where we had both grown up. We took out a mortgage to do this.

During my consulting contract, I focused on building savings to cover a year's worth of expenses. Ashley and I also worked together to pay off our home mortgage. This is one of the reasons I decided to renew my contract instead of going full-time on Shift sooner. I couldn't lose that higher income and maintain my current financial goals. With the additional salary, extra income from Shift, and Ashley's help we were able to pay off our mortgage the following year.

Once Shift started replacing my salary in 2019, I took more of this money and put it into financial investments. I have invested in the stock market since I was 18. Any extra money I made from side work or iOS apps I'd put into stocks. I can't say I've made much money in the stock market. Mostly because I made all the common mistakes of any first-time investor. While I have learned the hard way, I am more experienced now. I've seen much better returns in recent years.

Investing in the stock market is something I am comfortable with. I believe it's one of the ways to make money with money. Over a long enough timeline, the stock market can generate wealth. It's not for everyone. Ashley is actually more comfortable investing in real estate. In fact, when she replenished her savings, we invested in a rental property.

These investments are about the future. They're about taking money we have today and turning it into more money tomorrow. Ideally with little or no effort. A way of putting your money to work. The investments can be whatever you're comfortable with. For example, cryptocurrency. I have a little in crypto. But overall, probably less than 10% of my investments.

The final tier I am working towards now is a retirement fund. As a solopreneur, I don't have a traditional 401(k). There is an opportunity for me to do a simplified employee pension (SEP). I personally don't prefer these retirement plans. If a company offers a match program, then I will invest. Generally though, despite the tax advantage, I don't like the idea of waiting until I'm old to access that money. Instead, I plan to create my own retirement investment fund using future earnings from Shift.

Ashley and I will both contribute to this fund. The goal will be to grow this fund enough to where its returns replace one of our salaries. Maybe in 10 years it might replace both our salaries. This would bridge the gap until our traditional retirement funds mature. Since we have no debt, good savings, and other investments, this could be enough to live as we do now. Although, now with Isabella, I will have to factor in the cost of raising a family. I also want to begin a separate financial plan for her.

Again, this is not financial advice. I'm sharing how I have tried to put this money to work for me. Personal finance is not something which is talked about often. But it's important. Especially in the tech industry where salaries may be higher and money needs to be managed. Hopefully sharing my goals helps you to make your own.

Exit Strategy

Personal Notes

Shift will likely be the last project of my programming career. I am approaching 40. For each year that passes, it becomes less and less likely I could return to a day job. It's more likely I would move to something in a different space entirely. Maybe day trading. Maybe woodworking. Maybe I'll open a local co-working space.

Shift probably has a few years before its decline. This would probably coincide with Laravel's decline. Which is probably even farther out. If that were the case, I'd create automation to migrate a project from Laravel to whatever the next thing is. Then call it done.

Again, all speculation. In the meantime, Shift will remain my focus. I still enjoy tweaking the automation. I enjoy meeting fellow Laravel developers and helping them upgrade their successful (albeit old) Laravel applications. Most importantly, I enjoy being part of the Laravel community. I hope I have added value with Shift.

Thanks for reading and keep shifting!

]]>